Hi, I’m Peter. I currently work at the ODI (Open Data Institute) where I am Director of Public Policy. I will start with my usual warning, particularly for an audience where English is not the first language. Sometimes I speak too quietly and too fast and I often make bad jokes and obscure references. I’m bad like that. This is my last public talk for the ODI so I am even more likely to do that than normal. Please tell me off if you cannot follow what I am saying. I will stop and get better.

About the ODI and about me

The ODI is a not-for-profit that works with businesses and governments to help build an open and trustworthy data ecosystem. The ODI believes in a world where data works for everyone. As simple to describe, and as hard to achieve, as that.

In that world data improves the lives of every person, not necessarily every business or every government. Some businesses and governments are deliberately building new monopolies or causing harm to people. Sometimes it is not possible to fix that behaviour by working with those organisations; instead, other ways to change behaviour are needed. I will talk about those later.

At the ODI I have been heading up the public policy function — I’ve been responsible for the ODI’s views on the role of data in our societies.

I am a technologist by background and I somehow stumbled into the world of public policy a few years ago. One of the things I have been focussed on in that time is making sure that public policy is informed by and tested in practical research and delivery (and vice versa, that delivery work aligns with policy thinking). Data, technology and people are always changing. A strong link between practice and policy helps make stuff useful.

I am here to talk about practical data ethics. I would like to start by talking about how we create value from data; why we need to change the behaviour of people and organisations that collect, share and use data; and finally to talk about some possible interventions to change behaviour — including practical data ethics.

Creating value from data

Value is created from data when people make decisions.

To maximise the decisions that can be made we need to create tools that meet the needs of different decision makers — for example a mapping app to help me find the building that we are in today, a bit of sales and customer analysis to help a business decide whether to invest in a new product, or a research project to help a government decide whether and where to build a new road.

To create this range of tools we need to make data as open as possible.

This needs stewards (the people who decide who can get access to data) to make it accessible in ways that the people creating those tools can use. There are a number of reasons why they might do this, but it is (hopefully!) always driven by the need to use the data to tackle a problem by making a decision.

The problems with data

Unfortunately there has been a rush to collect data, open up data, share data, or make more decisions using data without thinking about whether or not we should.

This is an ethics event so I am going to start by talking about harms. Rather than organisations making data work for people, they make it work against them.

Harm to individuals. People in the USA have been sent to prison based on decisions by a judge influenced by algorithms that could not be inspected or challenged. The algorithm was meant to reduce human mistakes and bias. Subsequent research has shown that the algorithm was “no more accurate or fair than predictions made by people with little or no criminal justice expertise”. It was probably less accurate than the person it replaced. It certainly wasn’t as accountable.

Harm to groups of people. These are often groups of people that are already disadvantaged.

The UK Government launched a new online service to check passport photos. It did this knowing that the service was more likely to fail to work for people with darker skin. To put it another way, the service was known to work better for white people than black people. Is that ethical? Should it be legal?

Meanwhile, when the UK Government transferred the EU’s General Data Protection Regulation (GDPR) into UK legislation, it put in place an exemption that reduced the protections in cases where the government was using the data to enforce immigration controls. This follows the recent Windrush deportation scandal, which was partly built on unrealistic expectations of data availability and quality, and happened during the ongoing Brexit negotiations, which could lead to 3 million EU citizens being at risk of deportation from the UK. A recent court case found that the data protection exemption was legal. But was it ethical?

Harm to groups of people is not always caused by personal data. The excellent book Group Privacy contains many examples. One that sticks in my head is from the South Sudanese Civil War. The Harvard Humanitarian Initiative published analysis created from satellite imagery to help people find and get aid to refugees. Unfortunately terrible human beings used the same analysis to find and attack those same refugees. The tools that the team had available had helped them think about mitigating the risk to individuals from the release of personal data, but not the threats to groups of people created by non-personal data.

And as a final example, there has been damage to our democracies: through the use of data in political advertising and to spread misinformation, most famously in the Facebook/Cambridge Analytica debacle. Personally I do not think that the data collected by Cambridge Analytica had much effect (I reckon they sold snake oil), but the fear of it having had an effect is damage in and of itself.

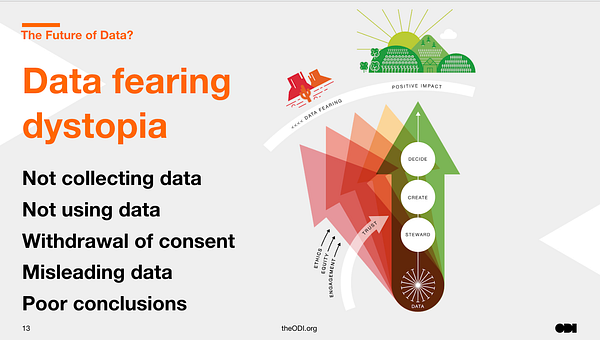

Left unchecked, these harms will lead us to a data wasteland where organisations do not collect or use data, people withdraw consent and give misleading data, and as a result we reach poor conclusions when we try to make decisions based on data. That reduces the social and economic value that data could create.

But there is another type of harm: people and organisations collect data but use it only for their own purposes. They don’t make data work for everyone. They just make it work for themselves.

This is data hoarding. It is the attitude that “data is oil and I must control it”. Data is collected and used within a single organisation for too narrow a purpose.

A simple example comes from Google. In recent years Google have encouraged people to crowdsource data about wheelchair accessibility in cities so that it is easier for people in wheelchairs to move around. But the data is only available in Google Maps. The people who contributed the data would surely have wanted it made more widely available: so that people in wheelchairs who use Apple Maps could find their way around, or so that civil society and city authorities could use it to improve wheelchair accessibility in cities. Instead the data is hoarded by Google to create a competitive advantage and bring in more customers.

There are vast amounts of data locked up in data monopolies like Google, Facebook, Apple, and legacy organisations like big multinational corporates or national mapping agencies.

This leads to lost opportunities for innovation. Innovation that might have created better outcomes for people. As a result lots of people are looking at data as a competition issue at the moment.

It also leads to lost opportunities to understand and tackle major societal challenges, like the impact of the internet and web on our democracies, how to cope with ageing populations or increasing urbanisation, or how to prevent or reduce the impact of climate change. We need to be careful of vital data infrastructure becoming over-reliant on private sector firms, and of the excessive data collection caused by some business models, but just imagine the data held by governments and businesses that could be made safely available to help with these problems.

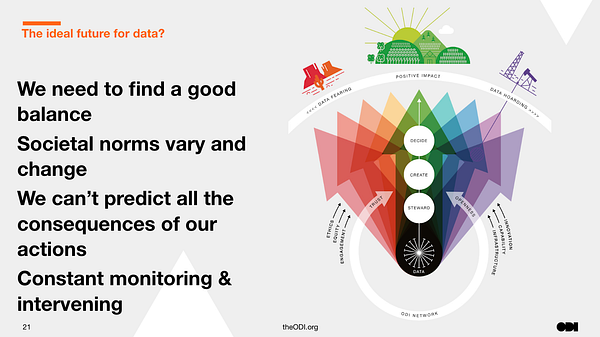

The challenge is finding a path between the data wasteland and data hoarding. If we make data too open and available then it causes harm; if we do not make it open enough then we lose benefits and concentrate power in monopolies.

We need to move from a world where people are rushing to collect, share and use data to one where societies have more strategic decision making about data. Where data is as well maintained and useful as other forms of infrastructure like road, rail and energy. Where there is better legislation, rules, guidelines, and professionalism.

In doing that we need to recognise that different societies will make different decisions about data. Just like they make different decisions about other forms of infrastructure. People’s needs and social norms vary.

As long as we stay within democratic norms and respect fundamental human rights then we should accept those differences. Many of my examples today are from high-income countries but personally I am excited to see what new futures emerge from the rest of the world. That would be a different talk though.

Anyway, moving to a better data future will require constant monitoring and intervening by a range of people and organisations. The ODI is one of the organisations doing that monitoring and intervening. The strategy for how and when we do it is on the ODI’s website.

Possible interventions

It is essential to think about the ecosystem around data and to think about multiple points of intervention. To create a world where data works for everyone many forms of intervention are needed. I am going to touch on some before getting to practical data ethics.

Many people start by thinking that better choices by citizens and consumers can change the world. Consumer power is the answer. Consumers will pick services from organisations that cause less harm and create more benefits.

Many people say that consumers are happy with the current situation — why else would they be using these organisations and services? Unfortunately work in the US by the academics Nora A Draper and Joseph Turow on digital resignation and the trade-off fallacy, and our own recent piece of work on how people in the UK feel about data about us, shows that most people do care and want a different future but that they feel unable to get there.

One of the things that is lacking is choice for consumers. The previously mentioned work on digital competition, and things like interoperability and data portability, will help but it will take time. It is not going to reduce some of the harms we can all see right now.

Regulators can intervene. In the UK the Open Banking movement designed a framework which was adopted by the UK’s banking regulator. It tackled competition issues by giving bank customers more control over data about them, and it included measures to protect against harms. Rather than leaving open banking solely down to consumer choice, a regulator approves who bank customers can share data with. I helped a bit, both with the framework and with the persuasion to get it adopted. The process has taken at least four years and is only now starting to produce changes that benefit people.

Another necessary point of intervention is legislation. This is essential and can radically change the behaviour of businesses and governments. But again, legislation takes time. That is a feature, not a bug, of democracy. Democracy comes with debate and compromise. GDPR took six years from the first legislative proposal until it started to apply.

For more immediate change there is existing legislation that could be used, for example anti-discrimination legislation and workers’ rights, but that legislation is likely to need updating: as with any legislation, we will learn that there are gaps and changes to be made.

More recently people are proposing the creation of new institutions, like data cooperatives and data trusts, to create more collective power and collaborative decision making. I led a team doing both policy thinking and practical experimentation on these institutions. There are other people doing similar work in other countries.

But these new institutions are in a research and development stage. We have to be realistic that it will take more time to determine if they are useful, where they are useful, and how to build and regulate them.

Practical data ethics

There are many other possible points of intervention but one important and often overlooked one is the people within the organisations that collect, share and use data. Which brings me (finally!) to practical data ethics.

In the USA there have been growing protests by tech workers against decisions made by their employers. In the UK, research by DotEveryone found that “significant numbers of highly skilled people are voting with their feet and leaving jobs they feel could have negative consequences for people and society.” Meanwhile consumers and citizens are saying that they do care and do want more ethical technology, and organisations respond to that. The need to retain both workers and customers creates pressure to change.

We should never forget that, as my friend Ellen Broad put it in her book, decisions are made by humans. Humans decide to fund or stop projects and to buy technology; they make design and development decisions; and they decide whether and how to evaluate the outcomes.

These decisions are influenced by consumers, governments and regulators but they are also influenced by other things such as professional codes, training courses and organisational methodologies.

Many people think the best way to intervene here is to define ethical principles. But when we look out at the world we can see that many, many principles have been created in the last few years, yet are they having any impact? A recent study into the US Association for Computing Machinery’s code said no. Meanwhile, how will people know which principles to apply in a given organisation, sector, or country? Who gets to define the principles? What right do they have? Who holds people accountable to them?

This does not mean that principles are useless, within an organisation they can demonstrate values and help create space for challenge, but we need to look at other techniques to make them more useful at the systemic level where the ODI is looking to intervene.

When Ellen Broad and Amanda Smith looked at this for the ODI a few years ago, they came to the conclusion that the most useful thing for the ODI to do was something a bit more practical and a bit more like the tools that people already use.

So, inspired by the business model canvas, we worked together to create a Data Ethics Canvas.

In the two years since then, various other people, like Fiona, Anna and Caley, have worked with me to iterate on it and helped turn it into what you can see today. Not all of those people work for the ODI. We have been iterating on it based on feedback from our own users and audience too.

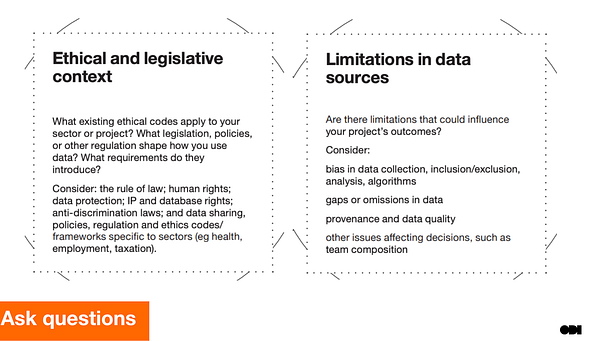

The canvas does not give easy answers; it asks questions. It encourages people to take responsibility for coming up with their own answers in their own contexts. The questions are inspired by the problems we and others see.

It prompts people to think about their existing ethical and legislative context (perhaps they are already covered by health ethics or anti-discrimination legislation, or by one of the many sets of AI and data ethics principles) and about the limitations of data.

(By the way, some principles, like those created by dataethics.eu, ask questions too.)

The canvas prompts people to think of both possible positive and negative effects, but it encourages them to think more deeply about which groups of people win and lose.

The canvas is designed to be used by multi-disciplinary teams of people, not just individuals. We have seen it used by groups including lawyers, developers, programme managers, user researchers, policy analysts, designers and product managers. It encourages people in organisations to create space and time for debate, and then to make and act on decisions.

The canvas also encourages transparency and openness. That way people outside an organisation can see how it plans to use data, what benefits and risks are expected, and what mitigation plans are in place. It encourages people in organisations to listen to people who they might affect.

But is it having any effect?

I have used it in public training, private workshops and conversations with a range of organisations. I have seen it broaden people’s minds about the range of ethical issues that they should consider before making a decision. I have seen senior people in organisations try it in a few projects then go on to implement it in their standard project governance.

I have also seen individuals sneak it into a few projects within a large organisation with the goal of proving its value before talking more with their bosses. You normally don’t need permission to try a new methodology. Give it a go in your own organisations.

As well as providing paid training we openly publish a print-at-home version of the canvas and a detailed user guide on the ODI’s website so that anyone can use or remix it. The open licence on the canvas means that anyone has the ODI’s permission to do that.

It is hard to track usage of something that is openly published on the web but I know from our own research and surveys that hundreds of people in public, private and third sector organisations at local, national, and global levels are using it because of that decision to make it openly available.

Those people work in multiple sectors like academia, civil society, public service, health, finance, engineering. Some are in large corporates, some in small startups. People tell me that some organisations have stopped projects because of questions raised by the canvas. Others say that they have redesigned products and projects. Brilliant. It is causing some decisions to be made.

I can only share those stories vaguely, because I respect the confidence and privacy of those people.

One organisation, the UK Cooperative Group, have talked most about their use of the canvas. It forms part of their standard product development model. Because the canvas has an open licence they could adapt it to suit their own needs. Perfect. I hope some of the many, many others will share their stories too. I think it will be less scary than they might think.

I am always wary of over-confidence. At a place like the ODI we get listened to and the canvas could actually be making things worse. Is the effect overall positive and how big is it? Only time and more detailed evaluation will tell. But from my own checks I am reasonably confident that it is helping.

There are other people building similar tools that are useful in different contexts. I got my old team to use DotEveryone’s consequence scanning kit to look at data trusts — for that level of institutional change DotEveryone’s tool was a more useful approach. The team at the UK Digital Catapult have published a very useful paper categorising some of the tools that are available.

Obviously this approach to practical data ethics is only one type of intervention. Accountability, through organisational processes, professional codes, regulation and legislation, is still very much needed. But practical data ethics can create some practical change now. If we can get people to be more open about their stories, it should also show policymakers where the biggest problems are and what regulation and legislation is needed.

Building a better future for people with data will take quite a while. There are some obvious problems, some of which have obvious answers, but there are also less obvious problems, and not all of the problems have easy answers. We all have to keep monitoring and intervening at multiple points in the system.

We need to stay optimistic and believe that it is possible. I believe being optimistic is a political act that makes it more possible that we will build a world where data works for everyone.

Anyway, I have rambled on too long. It is time for less talking from stage and more talking with each other. Grab me if you want to chat or email me on peterkwells@gmail.com if you do not get a chance.