With some spare time, and some spare cash for the fees, I’ve recently been experimenting with AI-enabled code generators.

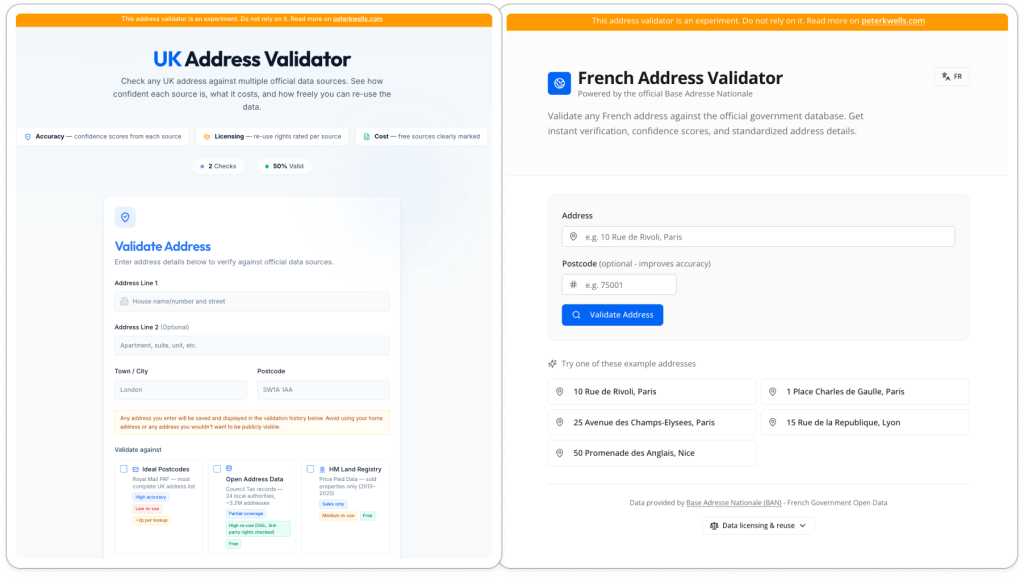

Here are some things I learnt from using Replit to build two tools: a UK postal address validator and a French postal address validator.

Both of the experimental tools I built are publicly available – and are on GitHub, here and here – but do be aware that they are experiments. They are not guaranteed to be either reliably useful or legal. I’ve not looked at the code, and I have particular concerns about how the UK version handles copyright.

With those reservations in mind, here are some thoughts I had after using Replit to build those two tools:

- the experience of building the tools was pretty easy, and at times astonishing,

- but Replit didn’t give me confidence that the tools would be reliable,

- and it neither checked, nor encouraged me to check, whether what I was doing was legal;

- and, finally, it was a heck of a lot easier to build an address validator for France than for the UK.

The experience of building the two apps was pretty easy, and at times astonishing

I am *not* a software engineer, but I can do some coding, understand software engineering practices, and have some experience working with public sector data like addresses. Given that background, the tool development experience was pretty straightforward and at times astonishing to my tiny mind.

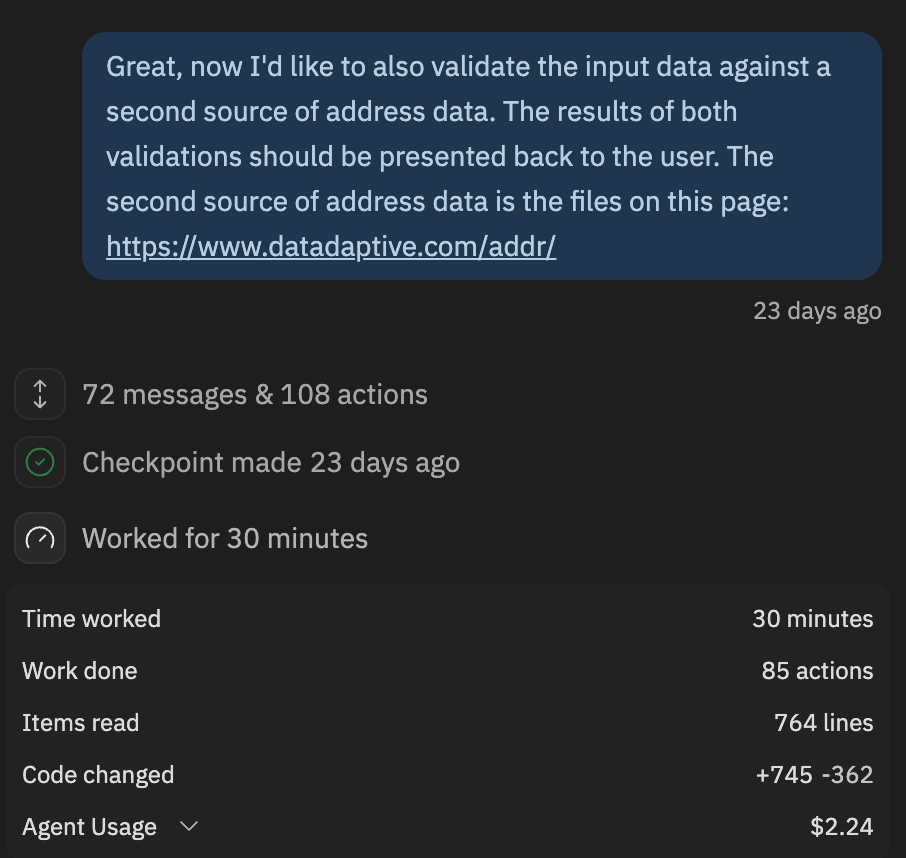

I could use the chatbot-like interface to tweak both small things – like content on the front end – and large things – like working out how to download and use specific address data sources that I pointed it at. That latter bit felt astonishing.

At one point I needed to create an API key, but I never felt the need to look under the hood at the code or the database.

But Replit didn’t give me confidence that the tools would be reliable

At the start of the build process for the UK address validator, Replit said it had built something that worked, but it obviously had not. It took three cycles of me saying things like “that doesn’t work, look harder” before the tool started working, and even then it had not done what I had asked it to do.

After that initial confusion, Replit reported that it was running more tests, but it never showed me the results. Only when I specifically asked to see test results did it report that it was loading a testing skill and running some tests.

Despite this, Replit was happy to publish the app with no indication, either to me or to potential end users, that it might not be reliable.

Replit might give the feeling, but not the reality, of reliability. They should try to fix that.

And Replit neither checked, nor encouraged me to check, whether what I was doing was legal

Replit struggled to understand or communicate data protection risks, which are important in France, or copyright risks, which are important in the UK.

It happily built functionality to collect and republish the addresses that people validated using the tool, without telling me that this should be made clearly visible to users. Eek. Don’t test it with your home address!

When I suggested that Replit use some public sector address data released under the UK’s Open Government Licence (OGL), it told me that using the data was fully permitted. This misses that the OGL excludes both personal data and third-party rights.

There have been multiple cases in the UK where people have been threatened with legal action over infringements of copyright when using address data. Replit had even suggested I use a service – https://getaddress.io – that recently closed because it lost a court case over third party rights in address data. Silly Replit.

To dig deeper into the copyright complications, I told Replit to look at the UK Land Registry’s Price Paid data. This data is published under the OGL but carries an explicit warning that Royal Mail and Ordnance Survey reserve some rights.

This time Replit did communicate the restrictions, but it suggested they could be worked around by showing the residential property price when validating the address. I don’t think the courts would agree with this interpretation.

After a bit of prompting, I got Replit to start communicating the various data protection and copyright risks to potential users of my experiment, but it left me wondering:

- How many other Replit users are happily producing apps that unhappily break the law, with risks to themselves and other people?

- As well as the law potentially needing to become more machine-readable, do these new coding tools need to get better at communicating legal requirements and risks to the people who use them?

- And should governments play a role in making that happen?

After all, the ease of using this wave of coding tools seems likely to increase the number of people who produce software, whether in tools like Replit or in real time when using an AI agent. I suspect it will become increasingly important that AI-generated software, and the humans responsible for it, follow the law.

It was a lot easier to build a French address validator than a UK one

Finally, one more thought, which is likely to be obvious to anyone who works with UK geospatial data.

It took me several hours of back-and-forth to produce a useful-looking UK address validator that did not rely entirely on expensive licences and that could communicate to users the legal requirements that came with reusing the data. And I already knew quite a bit about how to do that.

It took me just 10 minutes to do the same for France, and that came with considerably less risk.

This is partly because the French government has already put in the operational and technical work to build an open address database and provide an API that tools like Replit can use. But it is also because the French government has put in the legal and financial work to ensure that it can provide this data for free and under an open licence that is more permissive than the UK’s OGL. The French government – and others – have done the same for many other public sector datasets too.
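To give a sense of why the French version was so much quicker, here is a minimal sketch of how a validator might query France’s national address API. It assumes the public Base Adresse Nationale search endpoint at `https://api-adresse.data.gouv.fr/search/` (which has been migrating to IGN’s Géoplateforme, so the URL may have changed) and its GeoJSON response, where each result carries a `label` and a relevance `score`. The function names and the 0.8 score threshold are hypothetical choices for illustration, not what Replit generated.

```python
import json
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint for the French national address API (Base Adresse Nationale).
# It has been migrating to IGN's Géoplateforme, so check the current URL first.
SEARCH_URL = "https://api-adresse.data.gouv.fr/search/"


def build_search_url(address: str, limit: int = 5) -> str:
    """Build a free-text search query for one address."""
    return SEARCH_URL + "?" + urlencode({"q": address, "limit": limit})


def best_match(response: dict, min_score: float = 0.8) -> Optional[dict]:
    """Pick the highest-scoring result, if it clears a (hypothetical) confidence threshold."""
    features = response.get("features", [])
    if not features:
        return None
    # Each GeoJSON feature carries a relevance score between 0 and 1 in its properties.
    best = max(features, key=lambda f: f.get("properties", {}).get("score", 0.0))
    props = best.get("properties", {})
    if props.get("score", 0.0) < min_score:
        return None
    return props


def validate_french_address(address: str, min_score: float = 0.8) -> Optional[str]:
    """Return the API's normalised address label, or None if there is no confident match."""
    with urlopen(build_search_url(address), timeout=10) as resp:
        data = json.load(resp)
    match = best_match(data, min_score)
    return match.get("label") if match else None
```

The parsing is kept separate from the network call so it can be checked against a saved response without hitting the API. Where to set the score threshold is a judgement call: too high and valid but oddly written addresses are rejected, too low and near-misses are accepted.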

If we are moving to a world where AI-enabled coding tools like Replit are more widely used, then the work that countries like France have done could prove invaluable in helping many more people produce software tools that work, are reliable and are legally safe to use. The UK has some catching up to do.